Uncritical support for these AI bros refusing to learn CS and thereby making the CS nerds that actually know stuff more employable.

But to recognize people who know something you too need to know something, but techbros are very often bazingabrained AI-worshippers.

Unfortunately, I am experiencing the opposite effect. I am an IT contractor tasked with writing scripts, and for the past few years I have been applying for dev and IT jobs with a 2-year degree and no success, while I see old dinosaur fucks at my job not even knowing what functions are. They use ChatGPT to write scripts and run them without any modifications for the specific clusterfuck of an environment that they created, so the scripts are broken and run in production because we don’t even have a testing environment. Meanwhile I have to clean up their mess and get paid much less than them, with no PTO or benefits on top of that. It’s absolutely maddening to be moderately skilled in programming and witness some of the dumbest people on the planet get CS jobs through nepotism or impressing HR, more so the former. It seems like my workplace will hire someone if and only if they are incredibly incompetent.

And now the team of fucking morons is taking care of packaging, so the one thing in my job that gave me control to fix things and helped with my sanity is being stripped away from me, which means I get to image laptops unsuccessfully because their scripts will not work. I might as well bring a personal laptop to work and practice programming, since I am going to be sitting around waiting for shit to get fixed for weeks at a time. Can’t wait to get the fuck out of the IT field and pursue electrical engineering someday. I’m not wasting 10-20 years of my life just to get a single promotion in a field dominated by cop-worshipping, white supremacist libertarians.

For once, I wish I could use a real programming language in a sane environment where I am hired to actually be a developer, not an IT person doing 3 odd jobs that don’t help me build any transferable experience while getting paid less than I would for one.

Honestly, how is it possible to unionize white-ass techbros and old techfucks when they don’t hold themselves accountable, work against each other, refuse to keep up to date in their field or learn anything new, and scream fire over non-issues so that other teams have to struggle, let alone when most techbros are against unions or anything remotely left of Hitler?

Absolutely, IT and tech stuff is full of failing up. And it is exacerbated by generational knowledge gaps that put vaguely millennial-aged people in the “sweet spot” for competent debugging and CS knowledge. The older generations that did not need to update their skills retained positions where those skills had been needed, passing them to a younger generation so they could join a massively incompetent managerial class. And zoomers etc. never had to fight much with technical issues, so they went straight to frameworks and web stuff.

Obviously there are exceptions in all cases, but this tendency has led to a situation where the Boomer/Gen X managerial class does the incompetent things you mention, with everything working only because one or two people under them put out the fires (usually but not always in the millennial range). When still younger people get hired to replace them (i.e. to pay people less), the whole thing explodes and management does its best to fail upwards again while everyone else gets to be unemployed for a while. This can be put off for a while by accidentally hiring competent zoomers, who inevitably get sick of that shit and get a different job.

My comment is really just joking about a silly extreme of this, which is CS students failing to learn basic CS because they think that AI solves everything for them. AI basically becomes the nerd they pay to do their homework poorly, and then they will get a job, hear someone say, “this is all built on linked lists” and have no idea what anyone is talking about despite dropping $130k on a degree that covered that topic a bunch of times.

PS sorry you have to be in that environment, it sounds very frustrating. Nothing worse than being responsible to fix something that the higher-up decision-makers break. In my experience they even blame you for not fixing it fast enough or preventing the problem in the first place even though they forced the decision despite your objections. Scapegoating is rewarded in these corporate environments, it is how the nepotism fail-children protect their petty fiefdoms.

How do old techbros not know what a function is? Do they code entirely in hex or something?

My workplace for some reason wants 20 years of IT manager experience for dev jobs, and many of the incompetent higher-ups were promoted from IT despite having no experience, especially outside experience from any other company, which is why they make the stupidest decisions. Management is also equally incompetent. The particular software team that handles patches is led by a shitty manager that creates a terrible culture that seems to make even decent people become incompetent, like some brain worm. I worked with another contractor in my team that was hired for IT Networking with literally no experience. I haven’t had the same luck, probably because I don’t fit in with their culture.

Wait, is this not a joke?

“INTEGER SIZE DEPENDS ON ARCHITECTURE!”

That will also be deduced by AI

idk why boomers decided to call integers int/short/word/double word/long/long long

you ever heard of numbers? you think maybe a number might be a little more descriptive than playing “guess how wide i am?”

yet another thing boomers ruined

still is.

People took the math “they have played us for absolute fools” meme seriously.

Found javascript’s burner account.

nah im talking about stdint finally giving us int8_t, int16_t, int32_t and int64_t
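For anyone who hasn't run into these, here is a minimal sketch of the difference. The helper name `print_integer_widths` is made up for illustration, and it assumes a platform that provides the exact-width types (they are technically optional in the C standard, though mainstream compilers have them).

```c
#include <limits.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical helper: prints the widths the compiler picked for the
 * classic types next to the exact-width types from <stdint.h>. */
void print_integer_widths(void) {
    /* These vary by platform and compiler ("guess how wide i am"). */
    printf("int:       %zu bits\n", sizeof(int) * CHAR_BIT);
    printf("long:      %zu bits\n", sizeof(long) * CHAR_BIT);
    printf("long long: %zu bits\n", sizeof(long long) * CHAR_BIT);

    /* These are exactly as wide as the name says, wherever they exist. */
    printf("int8_t:    %zu bits\n", sizeof(int8_t) * CHAR_BIT);
    printf("int16_t:   %zu bits\n", sizeof(int16_t) * CHAR_BIT);
    printf("int32_t:   %zu bits\n", sizeof(int32_t) * CHAR_BIT);
    printf("int64_t:   %zu bits\n", sizeof(int64_t) * CHAR_BIT);
}
```

Call it from `main` on a couple of different machines and the first three lines can change while the last four should not.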

This is simply revolutionary. I think once OpenAI adopts this in their own codebase and all queries to ChatGPT cause millions of recursive queries to ChatGPT, we will finally reach the singularity.

There was a paper about improving llm arithmetic a while back (spoiler: its accuracy outside of the training set is… less than 100%) and I was giggling at the thought of AI getting worse for the unexpected reason that it uses an llm for matrix multiplication.

Yeah lol this is a weakness of LLMs that’s been very apparent since their inception. I have to wonder how different they’d be if they did have the capacity to stop using the LLM as the output for a second, switched to a deterministic algorithm to handle anything logical or arithmetical, then fed that back to the LLM.

I’m pretty sure some of the newer ChatGPT-like products (the consumer-facing interface, not the raw LLM) do in fact do this. They try to detect certain types of inputs (e.g. math problems or requests for the current weather), convert them to an API request to some other service, and return the result instead of an LLM output. Frankly it comes across to me as an attempt to make the “AI” seem smarter than it really is by covering up its weaknesses.
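A toy sketch of that kind of routing, to make the idea concrete. This is not how any real product does it; `try_arithmetic` is a hypothetical name, and the "detection" here is just a pattern match on `<number> <op> <number>`.

```c
#include <stdbool.h>
#include <stdio.h>

/* Hypothetical router: if the query looks like "<number> <op> <number>",
 * compute it deterministically and return true. Otherwise return false,
 * meaning "hand this one to the language model". */
bool try_arithmetic(const char *query, double *result) {
    double a, b;
    char op, trailing;

    /* Exactly three fields must match; a fourth means extra text follows. */
    if (sscanf(query, " %lf %c %lf %c", &a, &op, &b, &trailing) != 3) {
        return false;
    }
    switch (op) {
    case '+': *result = a + b; return true;
    case '-': *result = a - b; return true;
    case '*': *result = a * b; return true;
    case '/':
        if (b == 0.0) {
            return false; /* leave division by zero to the fallback */
        }
        *result = a / b;
        return true;
    default:
        return false;
    }
}
```

Anything the function rejects would fall through to the model, which is the "covering up its weaknesses" part: the arithmetic never touches the LLM at all.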

I think ChatGPT passes mathematical input to Wolfram Alpha

Yeah, Siri has been capable of doing that for a long time, but my actual hope would be that, more so than handing the user the API response, the LLM could actually keep operating on that response and do more with it, composing several API calls. But that’s probably prohibitively expensive to train, since you’d have to do it billions of times to get the plagiarism machine to learn how to delegate work to an API properly.

bit idea: the singularity but the singularity just crushes us with the colossal pressure past the event horizon of a black hole.

modern CS is taking a perfectly functional algorithm and making it a million times slower for no reason

inventing more and more creative ways to burn excess cpu cycles for the demiurge

Given a small allocation can take only a few hundred cycles, and this would take at minimum a few seconds, it’s probably more like tens to hundreds of millions of times slower at gigahertz clock speeds.
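Back-of-the-envelope version, with every number an assumption: a 3 GHz clock, 300 cycles for a small allocation, and 2 seconds for the round trip. That gives 2 s × 3×10^9 cycles/s ÷ 300 cycles = 2×10^7. The function name `slowdown_factor` is made up for this sketch.

```c
/* Ratio between a slow operation measured in wall-clock seconds and a
 * fast one measured in CPU cycles, at a given clock frequency in Hz. */
double slowdown_factor(double slow_seconds, double clock_hz, double fast_cycles) {
    /* Convert the slow operation to cycles so both sides share a unit. */
    double slow_cycles = slow_seconds * clock_hz;
    return slow_cycles / fast_cycles;
}
```

Plugging in a slower network or a faster allocator moves the result around, but with inputs in this range it stays in the tens to hundreds of millions.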

mallocPlusAI

That made me think of…

molochPlusAI("Load human sacrifice baby for tokens")

I’d just like to interject for a moment. What you’re referring to as molochPlusAI, is in fact, GNU/molochPlusAI, or as I’ve recently taken to calling it, GNUplusMolochPlusAI.

GNUplusMolochPlusAI!

GNUplusMolochPlusAI!

GNUplusMolochPlusAI!

Now is the time to dance!

GNUplusMolochPlusAI!

GNUplusMolochPlusAI!

GNUplusMolochPlusAI!

To a drum and bass beat

GNUplusMolochPlusAIplusDrumPlusBassPlusBeat

loop-loop-loop-loop-loop-loop-loop-loop-loop-loop-loop-loop-loop

I’d legit listen to this.

Can we make a simulation of a CPU by replacing each transistor with an LLM instance?

Sure it’ll take the entire world’s energy output but it’ll be bazinga af

why do addition when you can simply do 400 billion multiply accumulates

lets add full seconds of latency to malloc with a non-determinate result this is a great amazing awesome idea it’s not like we measure the processing speeds of computers in gigahertz or anything

sorry every element of this application is going to have to query a third party server that might literally just undershoot it and now we have an overflow issue oops oops oops woops oh no oh fuck

want to run an application? better have internet fucko, the idea guys have to burn down the amazon rainforest to puzzle out the answer to the question of the meaning of life, the universe, and everything: how many bits does a 32-bit integer need to have

new memory leak just dropped–the geepeetee says the persistent element ‘close button’ needs a terabyte of RAM to render, the linear algebra homunculus said so, so we’re crashing your computer, you fucking nerd

the way I kinda know this is the product of C-Suite and not a low-level software engineer is that the syntax is mallocPlusAI and not aimalloc or gptmalloc or llmalloc.

and it’s malloc, why are we doing this for things we’re ultimately just putting on the heap? overshoot a little–if you don’t know already, it’s not going to be perfect no matter what. if you’re going to be this annoying about memory (which is not a bad thing) learn rust dipshit. they made a whole language about it

if you’re going to be this annoying about memory (which is not a bad thing) learn rust dipshit. they made a whole language about it

holy fuck that’s so good

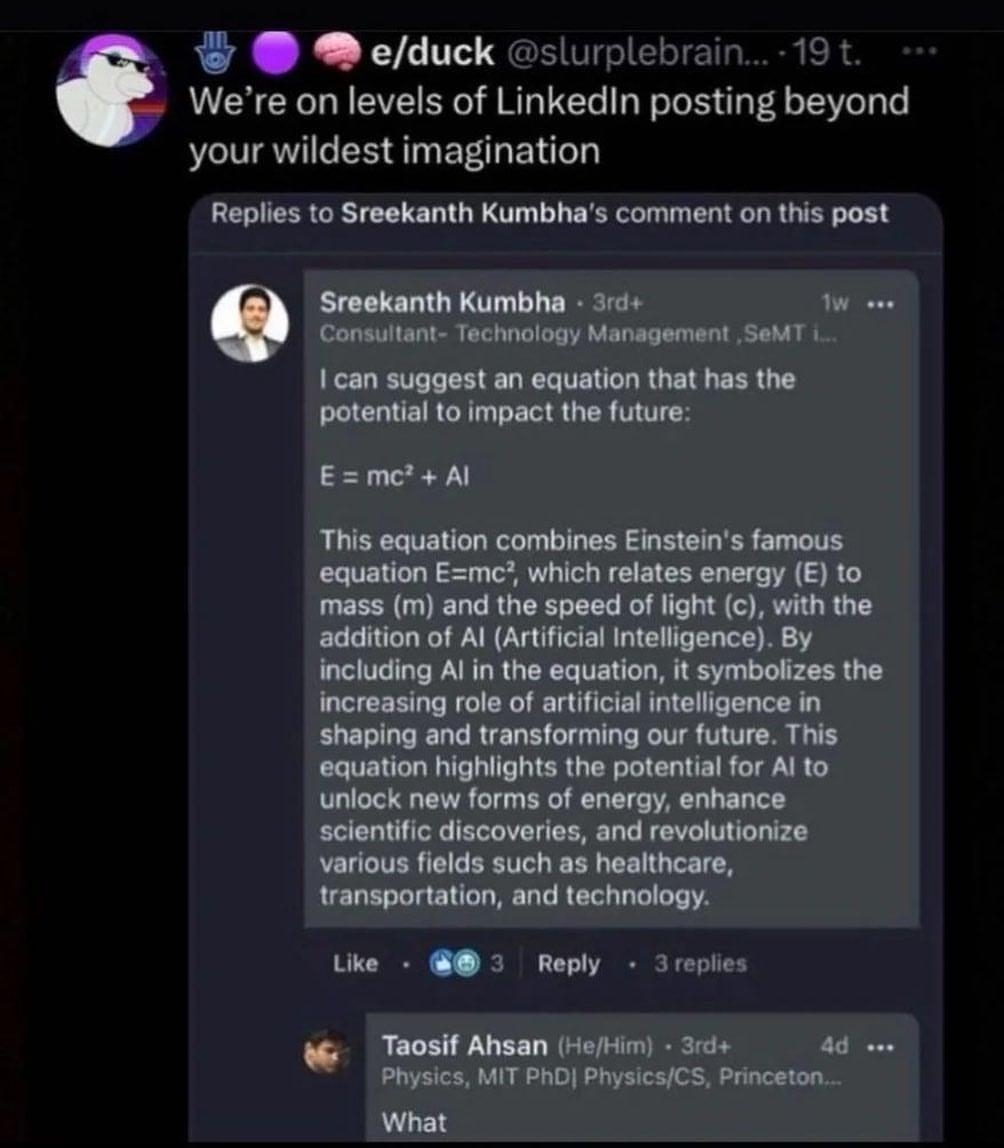

wait is this just the E = mc² + AI bit repackaged

I think this is the first solo I’ve seen and it’s beautiful

the syntax is mallocPlusAI and not aimalloc or gptmalloc or llmalloc

if they’re proposing it as a C stdlib-adjacent method (given they’re saying it should be an alternative to malloc [memory allocate]) it absolutely should be lowercase. plus is redundant because you just append the extra functionality to the name by concatenating it to the original name. mallocai [memory allocate ai] feels wrong, so ai should be first.

if this method idea wasn’t an abomination in and of itself that’s how it would probably be named. it currently looks straight out of Java. and at that point why are we abbreviating malloc. why not go the distance and say largeLanguageModelQueryingMemoryAllocator

I might call it like, malloc_ai so it’s like, I INVOKE MALACHI lol

there it is, the dumbest thing i’ll see today, probably.

oh this is just gold

Please be a bit, please be a bit

Wait this makes sense

AI is always 0

Oh that’s so dumb. So dumb.

Society is 12 hours of internet outage away from chaos.

Coming soon to Netflix?

Chaos Day (2025)

Tagline: 12 hours without the net

Please Iran, detonate an EMP over the US

this is definitely better than having to learn the number of bytes your implementation uses to store an integer and doing some multiplication by five.

sizeof()

whoa, whoa. this is getting complicated!

There’s no way the post image isn’t joking right? It’s literally a template.

edit: Type * arrayPointer = malloc(numItems * sizeof(Type));

Yeah lol it does seem a ton of people ITT missed the joke. Understandably since the AI people are… a lot.
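For completeness, a minimal sketch of the boring non-AI version with `int` standing in for `Type`. The function name `alloc_int_array` is made up here, and the overflow check is an addition on top of the one-liner in the comment above.

```c
#include <stdint.h>
#include <stdlib.h>

/* Allocates room for num_items ints. Returns NULL if the byte count
 * would overflow size_t or if malloc fails. The caller must free(). */
int *alloc_int_array(size_t num_items) {
    if (num_items > SIZE_MAX / sizeof(int)) {
        return NULL; /* num_items * sizeof(int) would wrap around */
    }
    /* sizeof(int) is whatever this implementation uses, so nobody has
     * to memorize it or ask a chatbot. */
    return malloc(num_items * sizeof(int));
}
```

Usage is `int *five = alloc_int_array(5);`, then check for `NULL`, use `five[0]` through `five[4]`, and `free(five)` when done.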

who even has time to learn about bytes and bits and bobbles? this is 2024 gotdamit!

I wrote a program that kills the Amazon

This right here is giving me flashbacks of working with the dumbest people in existence in college because I thought I was too dumb for CS and defected to Comp Info Systems.

One of the things I’ve noticed is that there are people who earnestly take up CS as something they’re interested in, but every time tech booms there’s a sudden influx of people who would be B- marketing/business majors coming into computer science. Some of them even do ok, but holy shit do they say the most “I am trying to sell something and will make stuff up” things.

Chatgeepeetee please solve the halting problem for me.

I switched degrees out of CS because of shit like this. The final straw was having to write code with pencil and paper on exams. I’m probably happier than I would’ve been making six figures at some bullshit IT job.

Honestly I like writing code on paper vs. actual code on a computer. But that just means I should have majored in math.

if it makes u feel better i graduated out of CS and can’t find a job bc the field is so oversaturated

I had to do that once. That was pre-ChatGPT tho, so I can imagine it’s worse now because of the rampant cheating.

I had to do it too (before and after LLM era) but it’s not really necessary ever. If they want us not to use ChatGPT they can make us take exams in a computer lab with supervision. For some reason, one or two profs refuse to do that and still hand out exams on paper where you gotta write entire scripts. Deeply unserious field.

I was pre ChatGPT also. Still do writing for my current job but I’m too much of a boomer to trust it with anything.