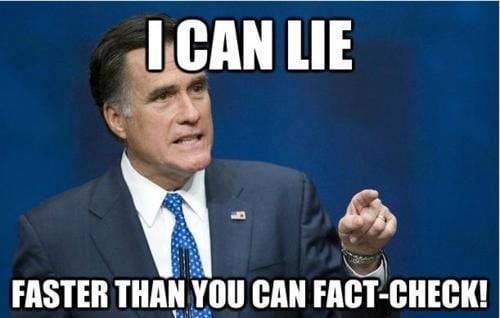

Also known as the Bullshit Asymmetry Principle.

As another commenter says, pointing out that a statement is bullshit is sufficient. The burden of proof is on the shitter, for holding a contrary opinion.

Except that people readily believe the lie and the liar, so you need to dispute it with data, and all the liar has to do is go “nuh uh!”. They’ll still influence people with zero effort.

In a fact-based discussion, sure, but bullshitting has other purposes: muddying the waters, creating distrust of media, simply drowning out non-bullshit.

Kamala did this during the debate and it worked much better than I expected. She was basically like “yeah, everything this guy just said is bullshit. Anyway, here’s what I’m going to do.”

Never argue with idiots. They drag you down to their level and beat you with experience.

-Wayne Gretzky

I won’t waste energy on refuting this bullshit.

-Michael Scott

“A lie will fly around the whole world while the truth is getting its boots on.”

-Mark Twain

If AI ever gets to the point where it can fact check in real time (with actual sources), it will completely change society. Unfortunately, it’s currently on the other side of the problem.

If anything, it demonstrates that the law has mathematical validity. Fact-checking simply requires more work than making shit up. Even when AI gets to the point where it can do research and fact-check things effectively (which is bound to happen eventually), it’ll still be able to produce bullshit in a fraction of that time, and use that research ability to create more convincing bullshit.

Fact-checking requires rigor. Bullshit does not. There’s no magic way to close that gap.

However, most social media sites already implement rate limits on user submissions, so it might actually be possible to fact-check people’s posts faster than they are allowed to make them.

Yeah, the AI stuff is suffering from this right now.

It has generated the best bullshit it can, and is now unable to differentiate it from the human-created content it uses to learn from.

It’s because AI is still stuck in the mimic phase. Once we figure out how to actually get it to learn in a structured manner, that’s the birth of the singularity. I don’t think current tech, even if taken to the extreme, will get us there, though. It needs to be something new, some different approach. Like how we went from faster and faster single-core processors to multi-core ones.

Maybe we just dedicate a nuclear power plant to running AI, and wait for some type of accident to boost some evolution…

By the very way AI works, it’ll never solve the problem. What we really need is Big Brother to tell us what’s right and what’s wrong.

Brandolini’s Law is great to keep in mind when discussing online, because while you’re busy refuting one piece of bullshit, the bullshitter is pumping out nine other bullshits in its place, so arguing with obvious bullshitters is a lost cause.

On the lighter side, pointing the bullshit out is considerably easier and faster than refuting it, and still useful, since whoever is reading the discussion will notice it. So when you see clear signs of bullshit*, a good strategy is to point it out and then explicitly disengage.

*such as distorting what others say, making assumptions, using certain obvious fallacies/stupidities, screeching when someone points out a fallacy, etc.

It can be very useful to pick just one element of a multi-part bullshit firework and refute the shit out of it, and then completely tune out the rest.

Sometimes even just the quality of thinking comes across and does some work.

They’ll either just go silent or change the subject. They never update their original bullshit, or admit they were wrong. Prove them wrong on one subject and they’ll go all buttery males, or hunterbidenlaptop on you.

We use words seriously, to convey facts and truths.

They use words as toys to infuriate and offend, all the while taking amusement from the collective effort to stop their disinformation and lies.

Most bullshitters just copy talking points… they rarely defend them, they just spew new ones. It’s like trying to persuade ChatGPT… it’s futile because the “source data” won’t be updated.

This is why bullshitters hate AI and think it’s biased towards Liberal/Woke Ideology. Spoiler: it’s not. It’s mostly the average of all people, which is: live and let live. (It’s idiotic to think of this “don’t tread on me” synonym as woke or liberal, but here we are.)

AI isn’t really the average of all people. It’s more like the average of all people on Reddit and other similar sources, so it does skew left. Microsoft took great care to eliminate hostile data from their training pool to avoid another Tay disaster.

Everyone is skewed left, even Nazis, when it’s about their own life. It’s what they want for others that’s “right”… at least that is my impression.

(Wasn’t the Microsoft AI trolled by 4chan?)

See also: the Gish gallop. https://en.m.wikipedia.org/wiki/Gish_gallop

“The sky is green!” - Bullshit

“Nu-uh!” - Energyless refutation

What bullshit is this?

Vance sure does love fucking couches!

There’s also the closely related Gish gallop

The favorite weapon of several politicians and creationists

And C-Suites across the globe.

“Alas, we cannot afford to keep everyone on board despite posting the highest profits in the history of the company. So, we will be laying off 1000 people who made those profits possible. In a completely unrelated announcement, our CEO will be receiving a fifty-million-dollar bonus this year.”

I have a few things in my reading backlog about bullshit. I think it tends to be trivialized in social discourse. It honestly feels like the patterns of bullshit exploit built-in biases we have.

This is my starting point for when I make some room for this topic: https://en.wikipedia.org/wiki/On_Bullshit

Wrong. That’s Brannigan’s law.

I don’t pretend to understand Brannigan’s Law. I merely enforce it.

- Zapp Brannigan

Ahh, every far-righter ever.