Helpful Resources

I’ll add more here as I remember them. Feel free to add more in the comments.

- AUTOMATIC1111’s Stable Diffusion WebUI is the software nearly everybody uses

- System requirements wise, for a while I used it on a 1050Ti with 4GBs of VRAM. I wouldn’t recommend going any lower than that. An RX580 with 8GB VRAM does wonders at a similar secondhand price point (if there isn’t any crypto hype going around where you are)

- Using https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111 can provide a really nice speed boost if configured correctly.

- Civitai has a really good selection of models, loras, and other resources

Models

Models are basically the brains of Stable Diffusion. They are the data SD uses to learn what your prompts mean.

The built-in models that come with Stable Diffusion are really bad for porn. Don’t use them. In fact don’t use them at all unless you’re training your own models, there are better SFW models.

Here are some of my personal favourites:

Anime

- MeinaHentai is a great model to start with. Compared to other models it’s really easy to prompt

- AOM3 also does really well, though it might be a little more difficult to guide

For all of those, I recommend installing https://github.com/DominikDoom/a1111-sd-webui-tagcomplete, as they heavily rely on danbooru tags.

- Berry Mix (Pre-mixed version here) can also work pretty well, depending on what you want to do. AFAIK it uses rule34 tags instead of danbooru, so it probably won’t work all too well with prompts used for the above ones

Realistic

- Uber Realistic Porn Merge is the only realistic model I know of that does hardcore stuff. It’s unfortunate problem is that it’s REALLY DAMN HARD TO USE

VAEs

VAEs are mostly used for finetuning colors, sharpness, what have you. Some models come with a VAE builtin, but for ones that don’t, it’s recommended to have one on hand.

- “Anything VAE”, “Orangemix VAE”, and “NAI Leak VAE” are the same exact thing under different names. If you already have one on hand, don’t bother with the others. Most VAEs are renamed versions or modifications of this one.

- Waifu Diffusion’s kl-f8-anime2 is also a pretty good one. It doesn’t require Waifu Diffusion.

- The one that comes with Stable Diffusion is the only one that seems to work for realistic stuff.

LoRAs

LoRAs teach models about concepts (characters, clothing, environments, style, …) they might not know about. There are a LOT of them, so feel free to browse Civitai to find ones you might want.

LoRAs tend to be specific for families of models, or at the very least styles (using anime LoRAs on realistic models tend to be a bad idea), but there are a fair few that will work across the board.

Locon and LyCORIS are newer formats of LoRAs. Not sure on the technical differences between them, but they will not work out of the box and need an extension such as https://github.com/KohakuBlueleaf/a1111-sd-webui-lycoris to get working

Textual Inversions / Embeddings and Hypernetworks

These are mostly obsoleted by LoRAs. There are a few embeddings such as Deep Negative and EasyNegative that are still quite useful, but in most cases you’ll want to use LoRAs instead.

I find Automatic1111’s WebUI to be pretty confusing and difficult to work with. I’ve switched to Easy Diffusion and I love it waaay more. It’s also a 1-click install program, all dependencies are already included

Is it just me or do all the AI generated porn images have something in common that makes them immediately recognizable? I am not quite sure what exactly it is but they do all have some quality in common.

Its the uncanny valley effect I think. Your brain recognizes that something is wrong in the image.

That might be part of it but I think there is also a sort of sameness, certain aspects that are always the same in the generated images and more varied in real ones. Possibly related to the averaging of all the input data.

Are these softwares all free to download/use? Also, how does one start doing this? do i just need the WebUi or do i need extra files to feed into it and stuff?

I recommend following a setup tutorial on YouTube. They usually link to models and stuff youll need. Look for a recent one, this stuff evolves a lot.

I just figured it out tonight playing around with the links and readmes available above. If you get stuck I can try to answer more specific questions.

Hmm ill probably have mroe questions but for now im curious:

- how much space was the download(s)?

- how confusing is the software to use?

- what kind of limitations does the software have? can i do multiple people? monsters? futa? etc.

Thanks for your help! :)

Sure,

1: The initial download was pretty small ~10GB. But with the models, lora(s), extensions, ect. I’m up to ~60GB.

2: The guide at the top was pretty easy to follow. Install the dependencies, then install the UI. Launch and run. There is a bit of a learning curve with all of the options but so far it hasn’t been too confusing.

3: That’s where the extra models/lora(s) come in. Various models are trained in different styles, poses, actions, ect. The lora files are smaller things, like poses. IE: Cowgirl is it’s own lora file that tells the model how to use the prompts you give.

how much space was the download(s)?

On my end, it’s sitting at ~64GB (with btrfs compression shenanigans), though 60 of those are from all the models I have installed. The download would probably be ~2GB, even less if you disable downloading the “default” models with

--no-download-sd-modeland instead pick models off of Civit or wherever manually.Edit: Should have mentioned. Most full models are between 2-4 GBs each. Some can be 5+ but they tend to be “full” versions intended for merging & such. LoRAs are generally smaller. Depending on how much they’re pruned they’ll be anywhere between 10-100 MBs each.

how confusing is the software to use?

There’s definitely a learning curve, yes. But there’s plenty of resources (and more importantly, examples) out there.

what kind of limitations does the software have? can i do multiple people? monsters? futa? etc.

As long as you have the correct models set up it can generate basically anything. At least with anime models, monsters and futa are a given. Your main issue will probably be multiple people, although there are solutions to that. (See the multidiffusion upscaler GitHub repo on the main post)

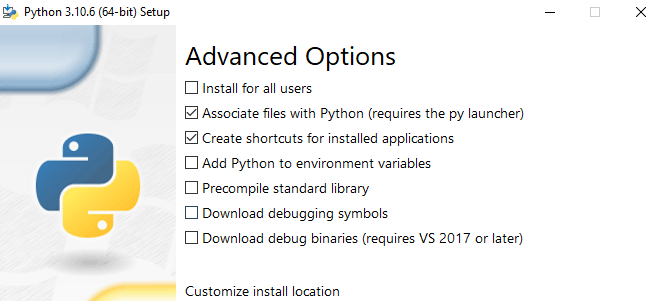

After extracting the WebUI files and doing the git clone, ive tried to double click webui-user.bat but the terminal that opens up says “python not found” but i’ve got python downloaded…

Not on Windows right now so I can’t confirm, but you probably forgot to pick “Add Python to PATH” or whatever the option is in the installer. Try running the Python installer again, maybe it’ll let you add it without needing to uninstall & reinstall

Edit: If you’re on Nvidia, there seems to be a simpler install method now: https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Install-and-Run-on-NVidia-GPUs (Method 1)

Hmm i uninstalled and reinstaqlled and i’m not seeing an option for “Add Python to PATH” Could there be an alternate name?

It’s probably “Add python to environment variables.” They must have changed the wording on that at some point.

Yea the webui and stable diffusion models/checkpoints are generally free to use.

This is a great guide and was really helpful when I decided to experiment to see how this works.

A couple of things that confused me when trying this out that might save the next person some time:

-

where to put models etc *.ckpt and *.safetensors files live in stanle-diffusion-webui/models/stable-diffudion These will automatically be loaded when you new start wrbui-usr.bat

-

how to change models This took me waaay longer to figure out than I’d like to admit. There’s a drop down top left of the webui to select the model after you restart

-

I find the range of models, loras, checkpoints, extensions etc overwhelming. Im still not sure exactly what each of these do and which ones I’d need. Eg: Whats a checkpoint for?

-

prompt writing is clearly a fine art and can drive you mad. For both 3&4 I found civitai.com/images to be a fantastic resource. Browse through the images for styles or images you like and most of them will have the resources used and generation data there to recreate it. I found this to be a great starting point, particularly for negative prompts.

-

deformity Deformed faces have mostly gone away for me by changing this webui setting: settings> face restoration > code former weight = 0 Just need to figure out hands and phantom limbs now…

-

Forgot to add, when experimenting with prompts. Use a fixed seed number so you can see how your prompt changes effect the image between each generation

It would be amazing to have a list of remote web hosts that just take a prompt and spit out an image. Some I know:

I remember a quick and uncomplicated site that gave access to some models and took a prompt. It wasn’t used too much and pretty fast if you selected a non default model. Sadly, I don’t remember the name but I miss it. It didn’t have a whole lot of bloated J’s framework in the frontend like the ones mentioned above.

I can also recommend https://undressclothes.ai, as it is quite new, has free creds and works fast.

Interesting model if you are into titfuck: https://civitai.com/models/7701/realistic-titfuck?modelVersionId=9042

Is everyone creating accounts with CivitAI to get these models?

I did, it’s definitely worth it

Why did mine get downvoted to oblivion

Did anyone manage to find out what model sites like anydream.xyz might be using? I have hard time emulating the style…

Anyone else use the OpenPose control net? I’m a little confused on the head portion and which control node is doing what. Every thing else about the editor makes sense and is intuitive. Do the outer most nodes on the head affect head tilt and rotation? And are the inner nodes eye placement? this seems to be the case but I’d rather not end up confused and mad when I’m inevitably wrong and their heads are backwards and eldritch abominations. lol

I recently came across Krita’s AI plugin. It’s pretty easy to install (especially if you have nVidia or ComfyUI already set up). And lets you easily fine tune your images.

I highly recommend using Forge UI. You get the speed of ComfyUi with the simplicity of Automatic1111 and some cool extra features.

For an excellent guide from beginning to doing advanced check out Olivio Sarikas on youtube. His has videos on controlnet, upscaling, training etc.

So what settings do y’all use when playing around with prompts, before you generate a few really nice HQ ones?

I use 600x800 (or 800x600) and 50 steps when experimenting with prompts. Then when i get one i like, I lock the seed, maybe do a few .01-.03 variations. Then lock the variation seed and turn on 2x HighRes Fix. That outputs a 1200x1600 that very closely looks like what I expected. (I’m using a 3090 with 24GB vram)

In my experiments, i found that doing quick and smaller tests with a low number of steps, then increasing it for the highres would change the output too much. I settled on this so that theres less options to toggle back and forth.

What’s your setup?

I tried to install sd webui, Installed python, git. When I run the run.bat Error keeps on coming AttributeError: module ‘OS’ has no attribute ‘statvfs’

Tried re installation of python with environment variable and max limit disable, still run into same attribute error. I am on windows 11, with nvidia Any pointers on what I am doing wrong