- cross-posted to:

- hackernews@derp.foo

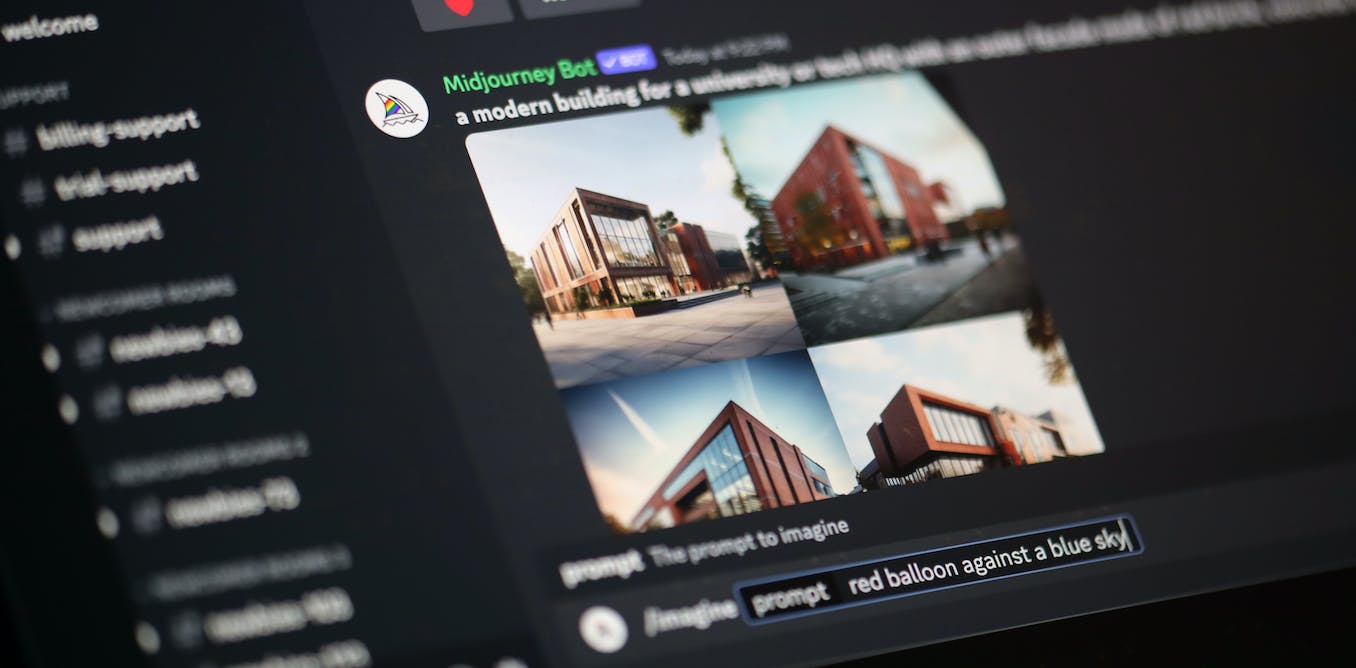

Data poisoning: how artists are sabotaging AI to take revenge on image generators

As AI developers indiscriminately suck up online content to train their models, artists are seeking ways to fight back.

Not even that. They can run the training dataset through a bulk image processor to undo it, because the way these poisoning tools work makes them trivial to reverse. Anybody at home could undo this with GIMP in a second or two.

In other words, this is snake oil.