Apr 18, 2022 | Tarique Anwar Writes:

The main reason ReLU is so widely used is that it is simple, fast, and empirically seems to work well.

But with the emergence of Transformer-based models, other activation functions and Gated Linear Unit (GLU) variants have been tried, and some of them do seem to perform better. Some of them are:

- GELU²

- Swish¹

- GLU³

- GEGLU⁴

- SwiGLU⁴

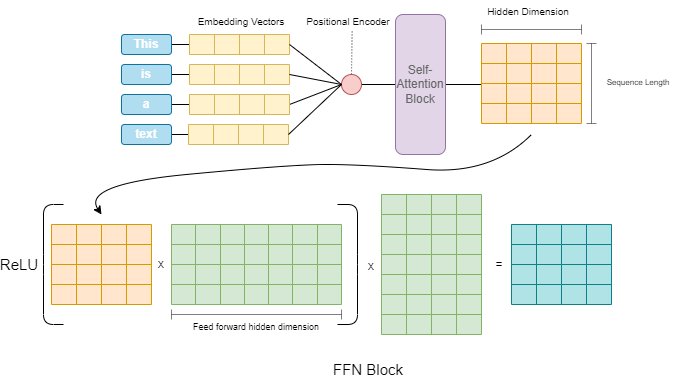
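To make the list above concrete, here is a minimal sketch of these functions in plain Python. The function names are my own, and the gated variants assume that `gate` and `value` are two already-computed linear projections of the same input (the projection weights are left out to keep the sketch short). GELU is written in its exact form using the Gaussian CDF; many libraries also offer a tanh approximation.

```python
import math


def relu(x: float) -> float:
    # ReLU(x) = max(0, x)
    return max(0.0, x)


def sigmoid(x: float) -> float:
    # Logistic sigmoid; fine for moderate inputs, not hardened against overflow.
    return 1.0 / (1.0 + math.exp(-x))


def gelu(x: float) -> float:
    # GELU(x) = x * Phi(x), where Phi is the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def swish(x: float, beta: float = 1.0) -> float:
    # Swish_beta(x) = x * sigmoid(beta * x)
    return x * sigmoid(beta * x)


def gated_unit(gate, value, activation):
    # Element-wise product of an activated gate with a linear value.
    # `gate` and `value` are assumed to be equal-length lists of floats.
    return [activation(g) * v for g, v in zip(gate, value)]


def glu(gate, value):
    # GLU: sigmoid gate
    return gated_unit(gate, value, sigmoid)


def geglu(gate, value):
    # GEGLU: GELU in place of the sigmoid
    return gated_unit(gate, value, gelu)


def swiglu(gate, value):
    # SwiGLU: Swish in place of the sigmoid
    return gated_unit(gate, value, swish)
```

The only difference between GLU, GEGLU, and SwiGLU in this sketch is which function is applied to the gate before the element-wise product.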

We will go over some of these in detail, but before that, let’s see where exactly these activations are used in a Transformer architecture.

Read Activation function and GLU variants for Transformer models
